Processing Tech

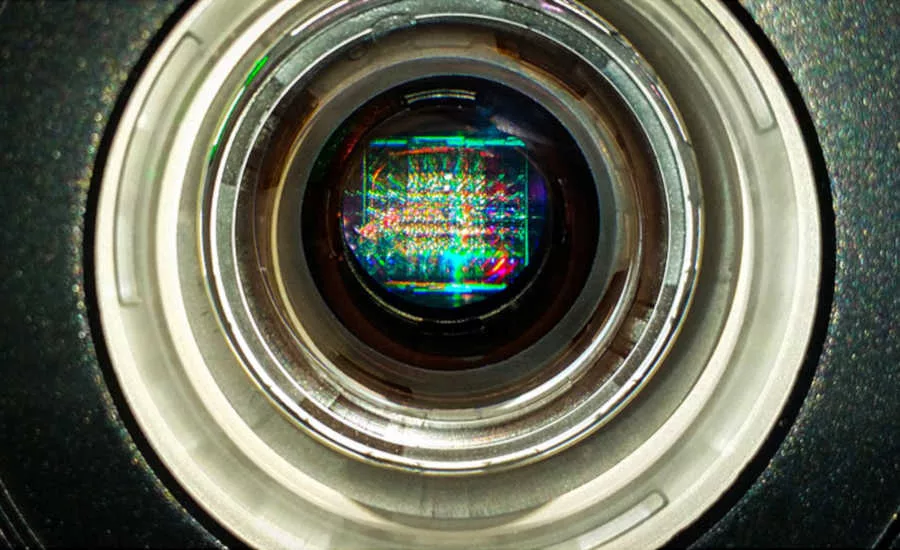

Vision system technology advances food safety and quality assurance

A view of a safer future

As the Food and Drug Administration (FDA) tightens its surveillance of food processors, regulatory officials have a heightened awareness of any type of food-safety hazard, says Donna Schaffner, associate director of food safety, quality assurance and training for Rutgers Food Innovation Center, in Bridgeton, N.J.

This is not surprising: During the first half of 2019, the single largest cause of food-safety recalls was plastic foreign material in food products. Several large meat and poultry processors had huge recalls this year after plastic pieces were found in their products, she says.

“Due to the fact that metal detectors obviously cannot find plastic and most X-ray systems are not able to detect small pieces of plastic, vision systems could become much more popular in the future, because of this reason,” Schaffner says.

For example, if a product was compromised early in processing by a colored plastic material, a visual scanner set to look for different-colored inclusions could detect the contaminants in the finished product more easily and quickly. Hence, in addition to aiding food safety, vision systems could save processors money by sparing them from discarding whole shifts of finished product, Schaffner says.

“It would be simple for food processors to move to a practice of only using different colored plastic, such as dark blue, for zip ties, product-contact plastic bags, gloves, plastic sleeves and hairnets/beard nets, etc., and then sending finished meat or poultry products through a vision system that is set to look for the different color, and thus finding plastic inclusions much more efficiently than human observation,” she explains.
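The color-based check Schaffner describes can be sketched in a few lines. This is only an illustration, not how any commercial vision system works: the function names, the HSV thresholds for "dark blue" and the tiny test frame are all assumptions, and real thresholds would need tuning to a plant's lighting, camera and plastic supplies.

```python
import colorsys

# Hypothetical thresholds for "dark blue" in HSV space (hue on a 0-1 scale).
# Real values would be tuned to the plant's lighting, camera and plastic.
HUE_RANGE = (0.55, 0.72)
MIN_SATURATION = 0.5
MIN_VALUE = 0.15


def is_blue(rgb):
    """Return True if an (R, G, B) pixel with 0-255 channels looks dark blue."""
    r, g, b = (channel / 255.0 for channel in rgb)
    hue, saturation, value = colorsys.rgb_to_hsv(r, g, b)
    return (HUE_RANGE[0] <= hue <= HUE_RANGE[1]
            and saturation >= MIN_SATURATION
            and value >= MIN_VALUE)


def find_blue_pixels(image):
    """Return (row, column) positions of blue-looking pixels.

    `image` is a list of rows, each row a list of (R, G, B) tuples.
    """
    return [(y, x)
            for y, row in enumerate(image)
            for x, pixel in enumerate(row)
            if is_blue(pixel)]


def should_reject(image, min_pixels=2):
    """Flag the product when enough blue pixels appear to rule out noise."""
    return len(find_blue_pixels(image)) >= min_pixels


if __name__ == "__main__":
    meat = (190, 90, 100)      # pinkish product pixel (made-up value)
    plastic = (20, 40, 160)    # dark-blue plastic pixel (made-up value)
    frame = [
        [meat, meat, meat],
        [meat, plastic, plastic],
        [meat, meat, meat],
    ]
    print(find_blue_pixels(frame))
    print(should_reject(frame))
```

The sketch also shows why standardizing on one plastic color matters: because meat and poultry contain almost no blue, a narrow hue band separates plastic from product with very little ambiguity.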

Robots that can ‘see’

While Georgia Tech Research Institute (GTRI), in Atlanta, has historically conducted testing in the processing plant with a focus on product quality, the institute is now conducting vision system research in the animal environment with a focus on animal welfare. For example, GTRI has mounted vision systems on robots in poultry houses to characterize the chickens’ natural behaviors. The vision systems are able to provide quantitative metrics for animal welfare. At the same time, researchers are looking at what else vision systems can sense in the animals’ environments to try to make improvements.

The vision systems also are capable of recording the sizes of chickens. Chicken sizes typically follow a bell curve, and the farther a bird’s size falls from the center of that curve, the more processing issues it could cause.

“If we can do a better job sensing and providing the right information to the farmers, they can do a better job of making more of the birds that fit under that need,” explains Colin Usher, GTRI’s senior research scientist. “A tighter standard deviation on that bell curve in the sizes of the chickens means less problems when it comes time to processing, less yield loss, less left on the frames and things like that.”
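The “tighter standard deviation” Usher mentions can be illustrated with a short calculation. The sketch below is an assumption-laden example, not GTRI’s method: the function name, the weights and the target range are invented for illustration, and it uses the population standard deviation from Python’s standard library.

```python
from statistics import mean, pstdev


def summarize_flock(weights_kg, target_low, target_high):
    """Summarize how tightly a flock's weights cluster around a target range."""
    in_range = sum(target_low <= w <= target_high for w in weights_kg)
    return {
        "mean": mean(weights_kg),
        "std_dev": pstdev(weights_kg),            # spread of the bell curve
        "share_in_range": in_range / len(weights_kg),
    }


if __name__ == "__main__":
    # Made-up live weights in kilograms for two flocks with the same average.
    uniform_flock = [2.4, 2.5, 2.5, 2.6, 2.5]
    variable_flock = [1.9, 2.5, 3.1, 2.2, 2.8]

    # Hypothetical range that the processing line is set up to handle.
    for name, flock in [("uniform", uniform_flock), ("variable", variable_flock)]:
        print(name, summarize_flock(flock, target_low=2.3, target_high=2.7))
```

Both flocks average the same weight, but the one with the smaller standard deviation has a larger share of birds inside the range the line is set up for, which is the effect Usher ties to fewer processing problems and less yield loss.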

GTRI’s vision systems are working inside a robotic system and are capable of picking up eggs and mortality from the poultry houses.

— Elizabeth Fuhrman

Vision systems advance

In addition to vision systems boosting food safety, processors are using vision systems for standard processing procedures such as monitoring label registration along with missed and faulty packaging to help with quality assurance.

Continued advancement in the processing capability of vision systems allows processors to apply more advanced algorithms. While this technology used to be too computationally intensive or cost too much money, vision systems are coming down in price and are more readily available, says Colin Usher, senior research scientist for Georgia Tech Research Institute, in Atlanta.

“You’re seeing more enhanced ability in the vision systems per dollar,” he explains.

Advances in hyperspectral imaging also have made it possible for processors to use this technology for foreign-material detection in product streams, both in the processing and packaging process, Usher says. In addition, hyperspectral-imaging technology has the potential to assess freshness in the product, he says.

While hyperspectral imaging has typically been extremely expensive and too slow to run online in real time, the industry is starting to see real-time systems released. A hyperspectral camera can cost $100,000 for the camera alone and take 30 seconds to capture an image, but new systems that compute online are being developed. Usher thinks this will open up opportunities for processors. For example, processors could tell on a molecular level what comprises a particular chicken breast, such as how much fat it contains or possibly something about its nutritional value, tenderness and taste along with product quality, Usher explains.

While vision systems used to be cost prohibitive for many processors, the more attractive price point of vision systems is leading the industry to adopt some of the systems while not necessarily embracing the cutting-edge technology, Usher says.

One of the challenges for vision systems in the food-processing environment is meeting the physical requirements for equipment that is subject to high-pressure washdown.

“They’re challenging from a hardware perspective to install these systems,” Usher says. “It used to be in a lot of cases, the electronics cost a ton and they were just expensive systems. Well, now what you’re finding is that the electronics are pretty cheap, but the actual enclosures and everything that’s required to put them in the environment is a significant cost.”

One area where technology could help processors is in cloud computing, which offers more advanced computations, Usher says. While more processors are allowing more processing to be done online, many are still hesitant about using the cloud or sending information to an external source.

“It’s a little bit of a tradeoff,” he explains. “ … They don’t want to give their data away or send it over the Internet, so to speak, but there’s a lot of potential there for applying some really cutting-edge machine learning and artificial intelligence (AI) for some of these products. If the industries would open up and be more accepting of doing stuff on the cloud, they could probably see a big benefit in some of the things that they’re doing there.”

Moving forward, Usher expects vision system pricing to continue to fall. More expensive systems featuring machine learning and AI will continue to be released. Additionally, cloud computing systems, in which the sensors are online or the processing is done in a data warehouse owned and run by a service company, will continue to hit the market as well, Usher says.
